To give a wartime example, let’s look at what occurred during the early stages of Russia’s invasion of Ukraine. To distinguish a threat to national security, it comes down to understanding the creator’s intentions.

Threats to national security are less frequent, though in theory they may occur in peacetime or war. Real people are losing money to deepfake-enabled fraud online. Whether deepfake fraud presents itself as the Elon Musk video mentioned earlier or the phone call described above, the result is the same. The employee wired $243,000 to the cybercriminal before realizing his mistake.

When the fake “CEO” called an employee to wire money, his slight German accent and voice cadence matched perfectly. For example, a recent scheme utilized artificially generated audio to match an energy company CEO’s voice. Bad actors have also used this technique to threaten and intimidate journalists, politicians, and other semi-public figures.įurthermore, cyber criminals use deepfake technology to conduct online fraud. Often, they inflict psychological harm on the victim, reduce employability, and affect relationships. Although counterfeit, these forged pornographic videos have real consequences.

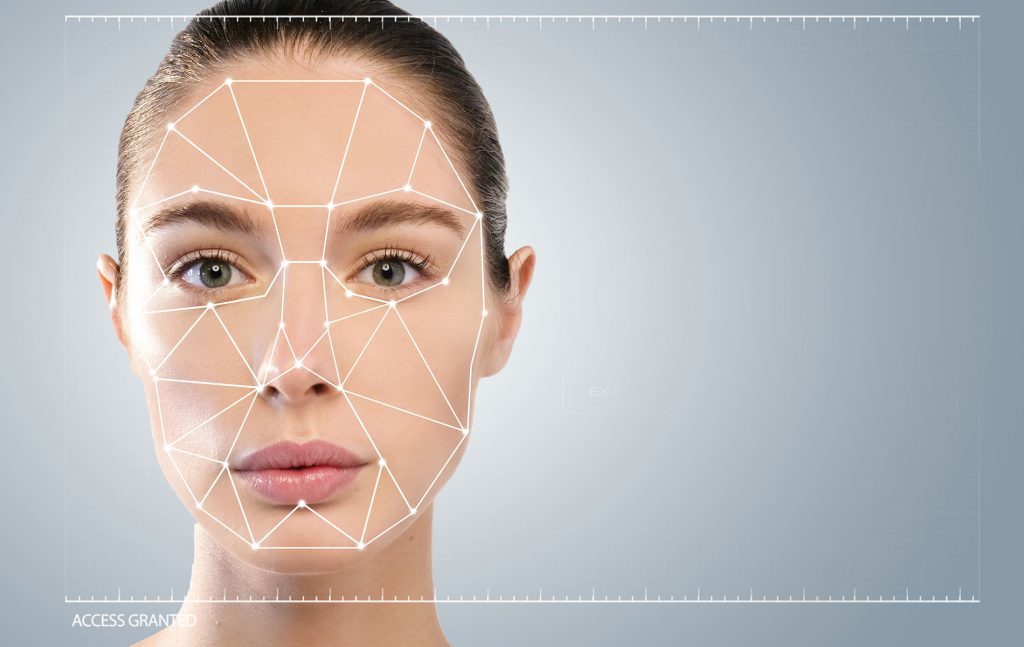

Usually, these videos contain the false likeness of celebrity women. This user introduced the technology to the mainstream through the creation and sharing of fabricated pornographic videos. In fact, the term “deepfake” originated from a Reddit user with the same username. The vast majority of threats to the individual are related to nonconsensual pornography. Solutions to the deepfake problem will likely differ between the two categories as governments and social media platforms weigh its ultimate impact. However, differences appear when thinking through the implications of misuse. They also provoke similar societal responses. Both types of threats use the same communication vector and the same technology. It has yet to be seen if social media giants and governments will holistically address the misuse of deepfake technology, however some efforts are underway.ĭeepfakes are potentially threatening to the individual and to the state. Additionally, academics and policymakers show varying levels of concern for how deepfakes could harm society. As the technology progresses, people will likely continue to use it for reputation tarnishing, financial gain, and for harming state security. Although deepfake technology is relatively primitive, bad actors have increasingly used it for malicious purposes. Often, these include creating an image of a person that does not exist, creating a video of someone saying or doing something they have never done, or synthesizing a person’s voice in an audio file. Deepfakes, fabricated videos which imitate the likeness of an individual, can take on many forms. Unfortunately for them, the video was not real, it was a deepfake. This situation occurred recently, and a small number of investors jumped at the opportunity after seeing the interview clip of Elon Musk. After all, you’ve heard stories from friends who have made money from Musk’s other endorsements. 
All you need to do is transfer funds to a crypto wallet and the returns will be guaranteed. Businessman turned celebrity Elon Musk is promoting a new cryptocurrency investment. Imagine scrolling through your favorite social media feed when something catches your eye-a short video clip of a familiar face.
